Launch

The Ljubljana Realtime event discovery engine uses Foursquare trending venues and geo-tagged posts from Twitter, Instagram and Flickr to discover what's happening in real life. At least six people checked in on Foursquare, or two different people tweeting or posting photos from the same location in a single hour, could mean something is going on. These events are posted to Twitter and Facebook, with links to the posts. A few versions of this algorithm have already been deployed, each one solving new problems, resulting in a few micro Build - Measure - Learn cycles in a single month.
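As a rough sketch of that basic rule (not the production code), the check for a single venue could look like the following. The function name, the `(user_id, posted_at)` tuple shape and the way the one-hour window is measured are assumptions made for illustration; only the numbers six and two come from the description above.

```python
from datetime import datetime, timedelta

FOURSQUARE_MIN_CHECKINS = 6   # from the text: at least six people checked in
SOCIAL_MIN_USERS = 2          # from the text: two different people posting
WINDOW = timedelta(hours=1)   # from the text: "in a single hour"


def is_event(checkins_here_now, posts, now):
    """Return True if a venue looks like it is hosting an event.

    checkins_here_now: number of people currently checked in on Foursquare.
    posts: iterable of (user_id, posted_at) tuples for this venue
           (a hypothetical shape, not the real data model).
    """
    if checkins_here_now >= FOURSQUARE_MIN_CHECKINS:
        return True
    # Count distinct users, so one person posting many times is not enough.
    recent_users = {
        user_id
        for user_id, posted_at in posts
        if timedelta(0) <= now - posted_at <= WINDOW
    }
    return len(recent_users) >= SOCIAL_MIN_USERS
```

Counting distinct users rather than posts is the important part: one enthusiastic person tweeting five times should not count as an event.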

Iteration 1: Foursquare, no duplicates

The first version of the stream (bot) was a simple one; at that point it was meant to work as promotion for the map. The only thing it knew how to do was to wait a few hours before it posted the same thing again. I think Foursquare check-ins stay alive for three hours, so if a venue was still trending after that time, new people must have checked in and the venue was still buzzing.
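A minimal sketch of that "no duplicates" behavior, assuming an in-memory dictionary and a three-hour cooldown (the three hours mirror my guess about check-in lifetime above; the class and method names are made up for this example):

```python
from datetime import datetime, timedelta

COOLDOWN = timedelta(hours=3)  # assumption: matches the guessed check-in lifetime


class RepostGuard:
    """Remembers when each venue was last posted and blocks early repeats."""

    def __init__(self, cooldown=COOLDOWN):
        self.cooldown = cooldown
        self._last_posted = {}  # venue_id -> datetime of the last post

    def should_post(self, venue_id, now):
        last = self._last_posted.get(venue_id)
        if last is not None and now - last < self.cooldown:
            return False  # still inside the cooldown, skip the duplicate
        self._last_posted[venue_id] = now
        return True
```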

Problem: Plain, no real added value.

Iteration 2: Adding activity from other sources

When we were trying to make some space on the crowded map, we started grouping posts from Twitter and Instagram by the nearest Foursquare venue, which meant having fewer boxes on the screen. This turned out to be quite a complex thing to do properly, but it was worth the effort. On only a few occasions, one venue would have multiple posts in a single hour, and in most cases, that meant something was happening there. This opened up another very interesting possibility for the activity stream. Actually, it made the stream bigger than the map could ever be.

(I love it when such things happen, when you are trying to solve a problem, and it turns out there is much more hidden behind the resolution.)

Grouping posts by venue. Did Ljubljana Realtime just discover an athletic meeting taking place?
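The simplest version of this grouping can be sketched as below. It is much cruder than what is actually needed to do it properly: it just assigns each post to the closest venue by great-circle (haversine) distance and drops posts that are too far from everything. The 150 m cutoff, the dictionary shapes and the function names are all assumptions for illustration.

```python
import math
from collections import defaultdict


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in meters."""
    r = 6371000.0  # mean Earth radius in meters
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def group_by_nearest_venue(posts, venues, max_distance_m=150.0):
    """Group posts under the nearest venue.

    posts: list of dicts with 'lat' and 'lon' keys (hypothetical shape).
    venues: dict mapping venue name -> (lat, lon).
    max_distance_m: assumed cutoff; posts farther than this stay ungrouped.
    """
    groups = defaultdict(list)
    if not venues:
        return {}
    for post in posts:
        name, dist = min(
            ((n, haversine_m(post["lat"], post["lon"], vlat, vlon))
             for n, (vlat, vlon) in venues.items()),
            key=lambda pair: pair[1],
        )
        if dist <= max_distance_m:
            groups[name].append(post)
    return dict(groups)
```

With a large venue list, scanning every venue for every post gets slow, and a spatial index would be the obvious next step.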

The next problem: Activity in some venues, especially generic ones such as "Ljubljana", would trigger the stream almost every day. Similarly, some large venues, such as supermarkets, would be trending too often on Foursquare.

Iteration 3: Balancing the posts

The algorithm needed an update that would reduce how often a venue is recognized as an event, either on Foursquare or on other channels. At first I thought about an upgrade that would set the number of people or tweets needed to trigger the "event discovered" action for a specific venue. This would enable us to reduce the importance of some venues, but it would also require manual work. Luckily, we came up with another brilliant idea: the more times a venue is trending, the harder it is for it to be trending again, at least for the next few days. Automatic balancing.
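One way to picture automatic balancing is a threshold that grows with each recent discovery at the same venue. The sketch below assumes a linear penalty of one extra person per recent event and a three-day memory; both numbers, and the function name, are illustrative guesses rather than the values we actually use.

```python
from datetime import datetime, timedelta


def required_count(base, past_events, now, window=timedelta(days=3), penalty=1):
    """Number of people a venue needs right now to count as an event.

    base: the normal threshold (e.g. 6 check-ins or 2 unique users).
    past_events: datetimes at which this venue was already discovered.
    window, penalty: assumed values, "the next few days" is not more specific.
    """
    recent = sum(1 for t in past_events if timedelta(0) <= now - t <= window)
    return base + penalty * recent
```

A venue that was discovered twice in the last three days would then need 8 check-ins instead of 6, and it drifts back to 6 once those discoveries fall out of the window.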

Venues with the most discovered events. Generic venues, along with massive places such as train stations, cinemas, squares and shopping centers, are too dominant.

The next problem: At this point, we had launched other test instances of Ljubljana Realtime (Maribor, Zagreb and Zurich) to see how the system behaves in other environments. Some cities are bigger, some are smaller, which means they produce different amounts of activity. Besides, different services are used differently in different cultures.

Iteration 4: Supporting local instances

Foursquare is big in Croatia (Zagreb), but not so much in Switzerland (Zurich), which means Zagreb Realtime's stream had a lot of Foursquare trending posts, while Zurich's had a lot of "Increased activity on Twitter and Instagram" posts. It was obvious that local instances needed different algorithms. Setting the trigger amounts for each individual venue would be too much to moderate, but applying the same logic per region could work. And it does. Zagreb now needs more people checked in on Foursquare, while Zurich needs more unique people tweeting or sharing photos.
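Per-region settings like this can be kept in a small lookup table. In the sketch below, the defaults (6 and 2) and Zurich's three unique users come from this post; the Zagreb check-in value is a placeholder chosen only to be higher than the default, and the names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    min_checkins: int  # Foursquare check-ins needed
    min_users: int     # unique people tweeting or posting photos needed


DEFAULT = Thresholds(min_checkins=6, min_users=2)

REGION_THRESHOLDS = {
    "ljubljana": DEFAULT,
    # Placeholder value: the text only says Zagreb needs "more" check-ins.
    "zagreb": Thresholds(min_checkins=8, min_users=2),
    # Zurich was set to three unique users at this stage.
    "zurich": Thresholds(min_checkins=6, min_users=3),
}


def thresholds_for(region):
    """Look up a region's thresholds, falling back to the defaults."""
    return REGION_THRESHOLDS.get(region.lower(), DEFAULT)
```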

Number of discovered events by type (Foursquare vs. Twitter + Instagram) on each day. Foursquare trending venues are dominating Zagreb, while social streams are dominating Zurich Realtime.

The next problem: The basic algorithm requires two different people to tweet/post from the same location in one hour. In the case of Zurich, this number was set to three, but it turns out this situation happens rarely, around 10 times less often than with two people, or only two to three times a day. Obviously not enough.

Iteration 5: Improving the "increased activity" weight

You can only have a whole number of people tweeting in the past hour. Two or three. In our case, we needed something in the range of 2 1/2. The modified solution adds the number of posts divided by ten to the number of users, which means that currently, at least two people making at least three posts in an hour (a score of 2 + 3/10 = 2.3) will determine a trending event in Zurich. This is not a perfect solution from the event discovery point of view, but it does what urgently needed to be done: prevent having too many tweets in the stream.
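The weighting can be sketched as follows. The threshold of 2.3 is inferred from the "two people, three posts" example rather than copied from the real configuration, and the score is kept in tenths as an integer so that no floating-point rounding is involved. The explicit minimum of two users is an extra assumption: with the bare formula, a single person making 13 posts would also reach 2.3.

```python
ZURICH_MIN_SCORE_TENTHS = 23  # assumption: 2.3, inferred from "two people, three posts"
MIN_UNIQUE_USERS = 2          # assumption: never trigger on a single person


def activity_score_tenths(unique_users, posts):
    """Score = users + posts / 10, expressed in tenths (an integer)."""
    return 10 * unique_users + posts


def is_trending(unique_users, posts, min_score_tenths=ZURICH_MIN_SCORE_TENTHS):
    if unique_users < MIN_UNIQUE_USERS:
        return False
    return activity_score_tenths(unique_users, posts) >= min_score_tenths
```

Two people with two posts score 2.2 and stay quiet; two people with three posts score 2.3 and trigger the stream.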

The next problem: We currently have four Twitter accounts that tweet events for these four cities. Our target was for each of them to make around 10 - 15 tweets a day, which seems low enough not to feel like spam. But how can a person see which of these events is THE event?

Iteration 6: Going super venue level 2

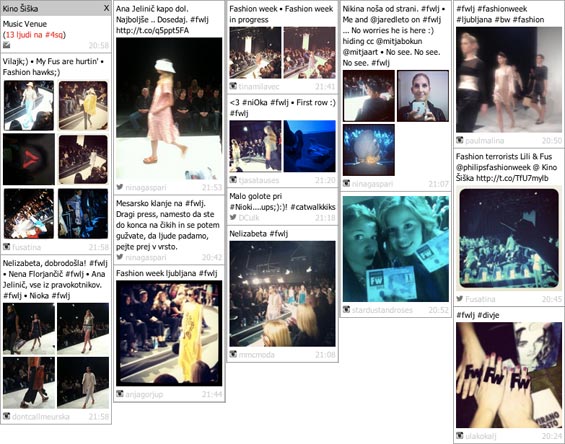

The latest version of the algorithm now recognizes two levels of events: an event (mostly 6 people on Foursquare, mostly 2 different people tweeting) and an outstanding event (around 12 people on Foursquare, around 4 people tweeting). Our goal was to make this super event happen only once every few days, on rare occasions twice a day, and it has already happened a few times.
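In code, the two levels are just a second pair of thresholds checked before the first. This sketch uses the approximate numbers above as fixed defaults (in practice they vary by region and balancing), and the enum and function names are made up for the example.

```python
from enum import Enum


class Level(Enum):
    NONE = 0
    EVENT = 1
    SUPER = 2


def classify(checkins, unique_users, event=(6, 2), super_event=(12, 4)):
    """Classify a venue's current activity.

    event / super_event: (min_checkins, min_unique_users) pairs; the defaults
    are the approximate values from the text, not exact configuration.
    """
    if checkins >= super_event[0] or unique_users >= super_event[1]:
        return Level.SUPER
    if checkins >= event[0] or unique_users >= event[1]:
        return Level.EVENT
    return Level.NONE
```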

Sometimes super events happen, with tens of posts in a single hour, such as the one for Philips Fashion week. These events definitely require more exposure.

The next iterations

At this point, I'm very satisfied with how the algorithm works, even though a few other modifications still need to be made (especially to support day-of-week specifics and behavior). By measuring what is happening, learning from that information and building the next releases based on that knowledge, the activity stream logic has come a long way from the initial version. Measuring is crucial, and we have rarely gone to such lengths to enable it in the widest way possible (e.g. the update to balancing the posts based on the previous events would be trivial by itself, but we wanted to log the things that would have happened but didn't, besides the things that actually happened).

These cycles of Build - Measure - Learn can be a lot of hard work, but they provide great results, which are also very fun and rewarding. Some people simply need to see how deep the rabbit hole is. Do you have any other interesting cases or experience with this approach?